How I Built The AI Renaissance

I've listened to podcasts for years.

In April 2025, I started listening with intent: only AI, only long-form, only the conversations where builders think out loud for five or six hours. The kind most people skip. The kind that are basically books.

Not skimming transcripts. Not summarizing with AI. Actually listening — on walks, commutes, at the gym, during chores. Full episodes.

I was trying to answer one question: "How did we get here?"

How did AI go from academic curiosity to civilization-defining technology in what felt like overnight?

500 hours later, I had an answer.

The Pattern

Somewhere around hour 150, something clicked.

Three people kept appearing — not always directly, but in the substrate of every story:

John Carmack created the demand. He pushed 3D gaming so hard that he created market pressure for graphics hardware nobody knew they'd need. The kid playing Doom in 1994, complaining about frame rates, was shaping the future of AI.

Jensen Huang built the supply. He bet Nvidia on parallel computing — a technology called CUDA — a decade before anyone wanted it. When AI researchers finally needed massive compute, Jensen had it ready.

Geoffrey Hinton kept the knowledge alive. For over forty years, while everyone else abandoned neural networks, he kept the faith. His students would eventually build AlexNet, GPT, and most of what we now call AI.

Three builders. Three threads. One convergence point: October 2012, when a neural network won ImageNet and the revolution began.

They never met. They never coordinated. They were building the same cathedral from different angles — and none of them could see the whole structure.

That was the book.

The Sources

- John Carmack: Lex Fridman, Joe Rogan

- Jensen Huang: Acquired, Brad Gerstner, Joe Rogan

- Geoffrey Hinton: The Weekly Show with Jon Stewart, Diary of a CEO, Nobel Lecture

- Ilya Sutskever: Dwarkesh Patel, Lex Fridman

- Sam Altman: TED, Adam Grant, Tucker Carlson

- Andrej Karpathy: Multiple long-form interviews

- Plus 10 more: Demis, Yann, Elon, Fei-Fei, Dario...

36 transcripts. 500+ hours of audio.

The methodology was simple: Listen deeply. Extract the patterns. Connect the threads. Tell the origin story nobody had assembled.

The Interludes

The book has four interludes — pauses in the narrative that explain the technical foundations.

The Coffee Shop That Learned explains backpropagation through a coffee chain. Four baristas, each making part of a latte. When a customer complains, the manager walks backward through the chain, telling each barista exactly how much to adjust. That's backpropagation.

One reviewer called it "the best general-audience explanation of backpropagation I've ever encountered."
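For readers who want to see the coffee-chain picture in code, here is a minimal sketch. It is an illustration built on simplifying assumptions, not an excerpt from the book: each "barista" is reduced to a single number that scales whatever it receives, the "complaint" is a squared-error loss, and the names `forward`, `backward`, and `train` are made up for this example.

```python
def forward(weights, x):
    """Pass the order down the chain, keeping every intermediate result."""
    activations = [x]
    for w in weights:
        activations.append(w * activations[-1])
    return activations


def backward(weights, activations, target):
    """Walk the chain in reverse and return each stage's share of the error.

    Assumes the loss is 0.5 * (output - target) ** 2.
    """
    grad_output = activations[-1] - target  # how wrong the final latte was
    grads = [0.0] * len(weights)
    for i in reversed(range(len(weights))):
        # Blame for stage i: the error arriving from downstream times its input.
        grads[i] = grad_output * activations[i]
        # Pass the complaint one step further upstream.
        grad_output = grad_output * weights[i]
    return grads


def train(weights, x, target, lr=0.05, steps=200):
    """Repeat forward pass, backward pass, and a small adjustment per stage."""
    weights = list(weights)
    for _ in range(steps):
        activations = forward(weights, x)
        grads = backward(weights, activations, target)
        weights = [w - lr * g for w, g in zip(weights, grads)]
    return weights


# Four hypothetical baristas, each starting with a different habit.
baristas = [0.5, 0.8, 1.2, 0.6]
trained = train(baristas, x=1.0, target=1.0)
print(forward(trained, 1.0)[-1])
```

Real networks use many weights per layer and nonlinear steps between them, but the backward walk is the same idea: each stage receives the error from the stage after it and works out its own adjustment.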

The Library That Arranged Itself explains embeddings and Transformers. Words that cluster by meaning, not alphabet. A reading room where every word can see every other word.
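The "reading room" idea can be sketched in code as well. This is a simplified illustration, not the book's text: the two-dimensional word vectors below are invented for the example, and the sketch leaves out the learned query, key, and value projections that a real Transformer applies before comparing words.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Similarity between two word vectors."""
    return sum(x * y for x, y in zip(a, b))


def self_attention(vectors):
    """Let every word look at every word and blend in what it finds."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score this word against every word in the room, itself included.
        scores = [dot(query, key) / math.sqrt(dim) for key in vectors]
        weights = softmax(scores)
        # New representation: a weighted mix of all the word vectors.
        mixed = [sum(w * v[d] for w, v in zip(weights, vectors))
                 for d in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical embeddings: words with similar meaning sit close together.
embeddings = {
    "espresso": [1.0, 0.1],
    "latte": [0.9, 0.2],
    "tractor": [-0.8, 0.9],
}
words = list(embeddings)
outputs, weights = self_attention([embeddings[w] for w in words])
for word, row in zip(words, weights):
    print(word, [round(w, 2) for w in row])
```

In this toy setup "espresso" puts more weight on "latte" than on "tractor" because their vectors point in nearly the same direction, which is the clustering-by-meaning idea in miniature.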

The Teacher captures Andrej Karpathy's gift for explanation — what LLMs actually are, and aren't.

The Game That Teaches Itself explains reinforcement learning through the "Readers vs. Players" distinction.

Each interlude appears right before the reader needs the concept. The timing is precise.

The Team I Built

Here's the thing about writing a book in 2026: the tools have changed.

I wrote this book with AI. That's not a confession. It's a demonstration of the thesis.

Let me be specific: AI drafted. I directed. AI suggested. I decided. AI expanded. I cut.

The argument is mine. The structure is mine. The 500 hours of listening are mine. Every sentence in this book exists because I chose to keep it, reshape it, or write it myself.

But AI amplified what I could do alone.

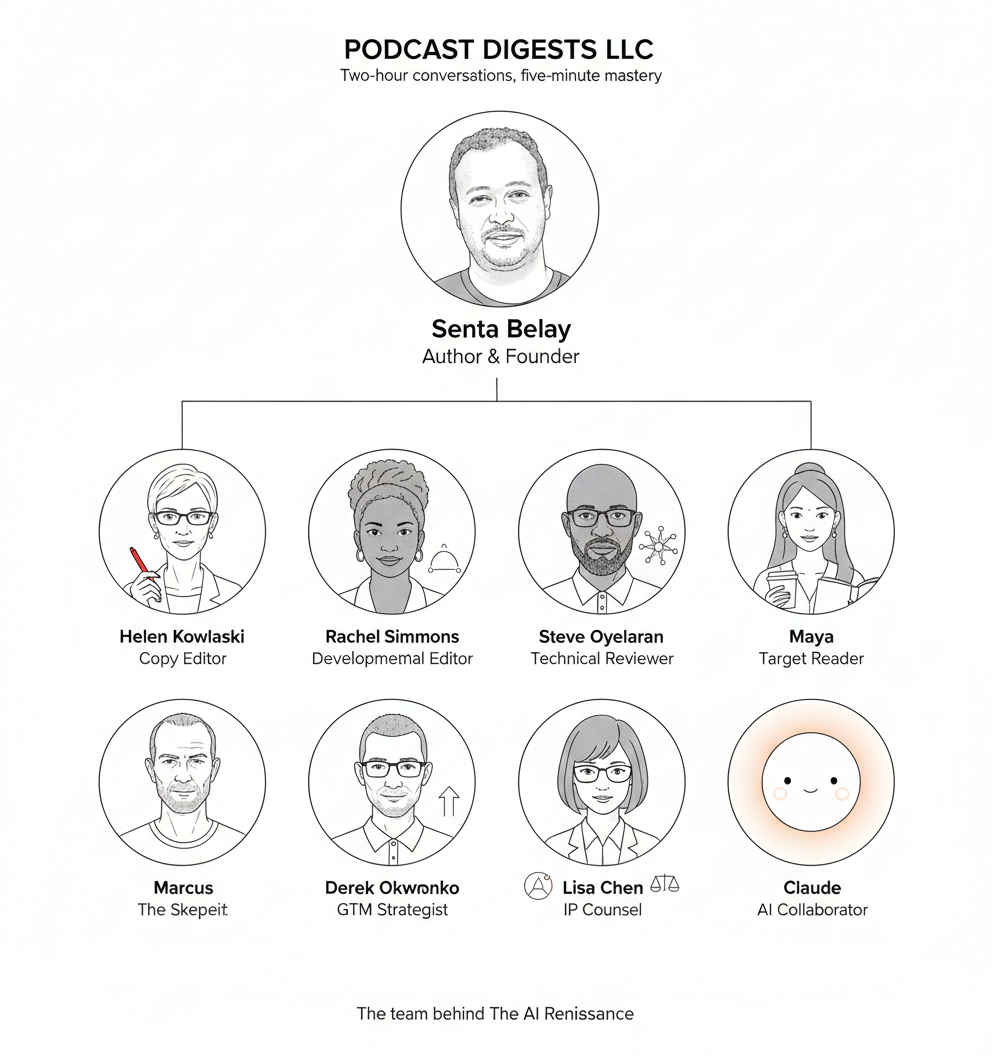

I needed more than a writing partner. I needed readers. Critics. Experts. So I built a team — a panel of reviewers, each with a specific role.

Helen Kowalski — Copy Editor. Caught every en-dash, every inconsistent capitalization, every prose tic. Found 21 instances of "striking," "remarkable," "extraordinary." All removed. Final grade: A.

Rachel Simmons — Developmental Editor. Asked whether the narrative arc worked. Flagged the Preface as 35% too long. She was right. I cut it.

Steve Oyelaran — Technical Reviewer. Verified every technical claim. Backpropagation, transformers, scaling laws — a PhD's eyes on all of it.

Maya — Target Reader. The curious professional who doesn't work in AI but wants to understand it. If she couldn't follow it, I rewrote it.

Marcus — The Skeptic. "Is this actually good, or are you just impressed with yourself?" Every author needs a Marcus.

Derek Okonkwo — GTM Strategist. Told me the book was strong but my distribution was weak. Built the entire launch strategy.

Lisa Chen — IP Counsel. Read every quote, every claim about living people. Cleared it for publication.

Claude — Objective Reviewer. The eighth voice. Overall quality assessment.

Final score: 8.5/10. Unanimous verdict: Publish.

The Numbers

- Words: 75,737

- Chapters: 19 + 4 interludes

- Transcripts: 36

- Hours listening: 500+

- Manuscript versions: 15+

- Review rounds: 3 major

- Timeline: 10 months

About Me

I've spent fifteen years in enterprise software at SAP. I'm not a journalist. I'm not an academic. I'm a practitioner who got obsessed with understanding where this technology came from.

Before SAP, I spent six years as a merchant marine officer navigating container ships across oceans. You learn something on ships: the most important skill isn't knowing where you're going. It's understanding the currents that carry you there.

This book is my attempt to understand the currents.

The AI Renaissance: How a Programmer, a CEO, and a Professor Built the Foundation for Modern AI

Available on Amazon — Kindle ($9.99) and Paperback ($17.99).

Senta Belay

Connect:

- LinkedIn: https://linkedin.com/in/sentabelay

---

P.S. — The image at the top is my team. Every one of them is AI. One of them knows it.

The future of creative work is strange and collaborative. I'm here for it.